What are domestic chip manufacturers doing with the rapid landing of large models?

The turning point for 2022-2023 has arrived

What is the turning point for 2022-2023? It is the emergence of the AI big model that has turned the marginal cost of acquiring knowledge into a fixed cost. "Lu Qi, the founder and CEO of Qiji Innovation, said in a speech in April," It is important to remember that anything that changes society or industry is always a structural change. This structural change is often a type of large cost, from marginal cost to fixed cost

And for the first time in history, ChatGPT has 100 million active users within two months, and they can't even stop it. Why? Because it encapsulates all the knowledge in the world; Having strong enough learning and reasoning abilities; The scope involved is wide enough, the knowledge is deep enough, and it is easy to use.

Together, the critical point of the new paradigm of AI has arrived, and the turning point has already arrived.

Huge computing power gap

Since it is called a big model, it is obvious that "big" is inevitably one of its biggest characteristics. How big is it? Yang Lei, Product Director of Anmou Technology, stated that if a GPT3.5 model with over 100 billion parameter scales were to be saved, it would require 100GB of storage space, which is of course much larger than the on chip storage scale of 1MB SRAM inside a regular chip.

But is that the end of it? Obviously not yet, because people think 'violence works miracles', such as the new model reaching 300 billion parameters and still rising violently, and we can't see where the end is at the moment, "he said.

On the other hand, traditional AI algorithms, such as beauty, facial recognition, facial brushing, etc., all belong to the convolutional neural network (CNN) architecture. However, the core computation of the GPT model based on the Tranformer architecture is a large matrix graph, which is different from traditional CNN algorithms from the perspective of underlying computing types. It is suitable for traditional CNN or NPUs for speech processing and may not be adept at efficiently computing AIGC class models.

Zou Yan, President of Tiantian Zhixin Product Line, believes that for leading enterprises, the early GPT3 model would require approximately 10000 Yingwei GPUs, but GPT4 reached a parameter scale of 100 trillion, which may require 30000 to 50000 state-of-the-art GPUs to complete. For many followers in this field, it is inevitable that they cannot lose to top companies in terms of computing power, and even need to invest more in computing infrastructure to achieve catch-up.

Regarding the continuous increase in the size of large model parameters, I personally have a slightly different view. In Zou Yan's view, one of the reasons is that the industry has not yet fully explored the performance potential of large models. The current large models are only a starting point, and leading enterprises hope to seize the undiscovered high ground of capabilities, so they continuously increase the parameters of general large models to develop new features; The second reason is that as the large model continues to iterate, it is impossible for so many computing investments to truly generate benefits. He personally judges that in the next 1-2 years, many repetitive investments will see a convergence stable threshold.

The Key to the Application of Fully Functional GPU as an AI Large Model

Faced with the demand for large-scale computing workload in the future, major chip manufacturers are now laying out large-scale computer architectures. Zhang Jianzhong, the founder and CEO of Moore Thread, pointed out that efficiency and management ability are more important for improving performance than the computing power of a single chip - just like Tesla's battery is not composed of a large battery, but is composed of many small batteries combined, GPUs will also be the same. No one can create a huge GPU to run algorithms, but they can complete tasks by combining multiple GPUs, And improve efficiency and management capabilities. Therefore, we don't just need to pursue scale up, but rather scale out.

This is also our advantage, as there are thousands of cores in GPUs. The principle of combining multiple GPUs is also similar, involving various aspects such as space, storage, network, and scheduling

The United States step by step, the local key chip supply strategy how to establish?

The United States has been talking about "de-globalization" and "de-risk", in fact, it wants to kick China away in the context of globalization. Therefore, for China, the most …Will mechanical hard drives end in 2028?

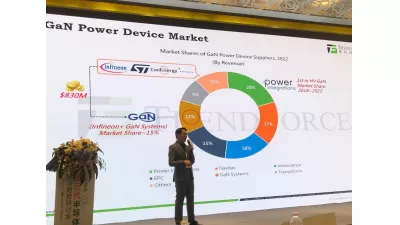

Pure Storage Vice President Shawn Rosemarin recently told the press that HDDS are expected to disappear by 2028 because of the continuing decline in the price of NAND per unit capacity, as well as the…What are the latest developments in SiC and GaN 'boarding'?

Although SiC has been widely used in automobiles, the cost of SiC devices is still too high for most cars due to yield and cost-effectiveness issues; The commercial use of GaN is concentrated in the f…Samsung Electronics showcases its new automotive technology strategy at Foundry Forum EU 2023

Since the development and mass production of the world's first eMRAM based on 28nm FD-SOI in 2019, Samsung Electronics has been promoting the development of AEC-Q100 level 1 applications based on …

- Top News

- HRE CSA Series Commercial Grade MLCC Capacitor Selection Guide

- HRE CIA Series Industrial Grade MLCC Capacitor Selection Guide

- HRE CAA/CAI Series Automotive Grade MLCC Capacitor Selection Guide

- FTR20D681K Varistor Applications and Technical Advantages: Providing Comprehensive Protection for Your Circuits

- Samsung Electronics showcases its new automotive technology strategy at Foundry Forum EU 2023